Follow these 4 tips to avoid this tricky AI-enhanced scam.

Are you familiar with the term “deepfake”?

No, it’s not a schoolyard football play drawn up by Kansas City’s head coach (at least not that I’m aware of).

According to the Government Accountability Office (GAO), “a deepfake is a video, photo, or audio recording that seems real but has been manipulated with AI.”

Deepfake technology can replace faces, synthesize faces and speech, and manipulate facial expressions.

Simply put: Deepfakes can depict someone appearing to say or do something they NEVER said or did.

How does this apply to banking?

According to multiple sources, a finance worker at a multinational firm was recently tricked into transferring $25.6 million to fraudsters who used deepfake technology during a video conference call.

The employee believed he was attending a video call with the company’s CFO and several other staff members – all of whom turned out to be deepfake replicas.

A suspicious email from the company’s CFO, which mentioned the need for a secret transaction, made the employee skeptical. He initially suspected it was a phishing attempt. However, after seeing the convincing deepfake re-creations during the video call, he cast his doubts aside.

What’s frightening is that, according to some sources, generative AI can replicate a person’s voice from a recording as short as three seconds.

Scary, huh?

Fortunately, Chris Snider, a generative AI expert and professor at Drake University’s School of Journalism and Mass Communication, has four pieces of advice for avoiding a deepfake scam:

1. Learn how to spot a deepfake.

“What deepfake technology tends to struggle with is the face movement … it just won’t seem quite right,” Snider said. “Maybe it’ll look like the face, but it will never really turn side to side. And you’ll only see that movement in the face – you won’t really see the shoulders move.”

Another tell is the background, Snider added.

“Sometimes the background moves a little differently than the people on screen … you’ll think, ‘that’s weird that nothing in the background ever moves.’”

Also, look out for:

2. Recognize the warning signs early and trust your gut.

“How did the conversation (the $25.6 million meeting) happen?” Snider asked. “What clues were there along the way?”

Remember, the employee acknowledged receiving a suspicious email that mentioned the need for a confidential transaction.

“I’m sure there were some breadcrumbs along the way that when put together would not have added up,” Snider said. “I think we have to put our guard up and trust our instincts a bit more.”

3. Know who you're speaking to at all times.

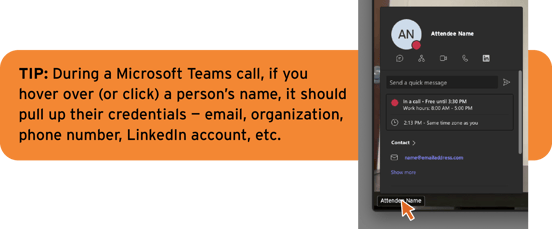

“Our Faculty Senate meetings are on Zoom, and you can only join if you’re coming from an official Drake.edu Zoom account,” Snider said. “Even on (Microsoft) Teams you can usually see if the person you’re talking to is verified.” (See the example to the right.)

These scams can also happen over the phone.

Fraudsters are increasingly using AI-generated voice recordings to orchestrate what’s known as the “Grandparent/Family Emergency Scam.”

According to the Federal Communications Commission (FCC), these attacks happen when a scam artist impersonating a grandchild – or another close relative – calls a grandparent with a story about a crisis and asks for immediate financial assistance to keep something bad from happening (e.g., going to jail). The caller may sound legitimate, since voices can easily be cloned using AI. Scammers can even spoof their caller ID to make an incoming call appear to come from a trusted source.

Tricky, tricky.

That’s why it's crucial to always verify the identity of the person you're speaking to. Follow these tips and communicate them with the senior members of your family:

4. Set protocols, communicate, and verify.

“I’m sure for financial institutions, moving $25 million, there’s some protocol about how this is done,” Snider said. “Just make it clear to your employees that if you’re going to do something that involves a money transaction, there’s protocol where it’s not just done on one call.”

Next, always verify your transactions. Treat your institution’s money with the same level of caution as your own.

“We have this mistrust built in when it’s our own money; we should probably do that for our company’s money as well,” Snider said.

Verification is crucial, as the fake CFO in this case was only discovered when the employee checked with the real organization's head office and learned they never received payment.

Knowing this, how are bankers supposed to sleep at night?

Snider suggests it isn’t time for financial institutions and consumers to panic.

“It seems like when it’s money involved, the government’s pretty good at getting people the money back,” Snider said. “And with these digital transactions, maybe there’s also a bit of solace in the idea that there’s probably a digital trail there that’s going to lead to you getting your money back in the end.”

So, don’t go off the grid just yet.

“We don’t need to delete all of our social media accounts and go live in the woods,” Snider said with a chuckle. “We can still live our lives even though there are going to be deepfakes out there. We can just get a little smarter about recognizing what’s real and what’s fake.”

5 min read

Boston, Massachusetts — home to Fenway Park, Freedom Trail, and some of the best seafood in the country! It’s also home to INBOUND, presented by...

3 min read

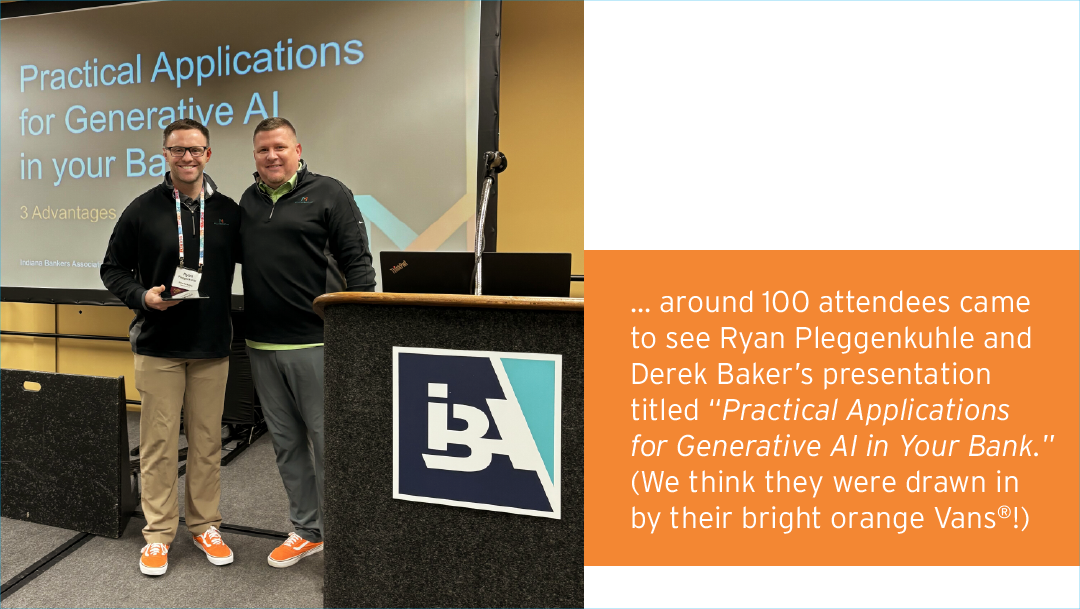

Generative AI Emerges as Major Theme at #IBAMega This Year. Earlier this month, Derek Baker and I had the opportunity to attend and present at the...

.jpg)

6 min read

Celebrating 50 Years of SMART and BOLD Financial Marketing. On June 1, 2025, Mills Marketing officially turned 50! To commemorate our milestone...